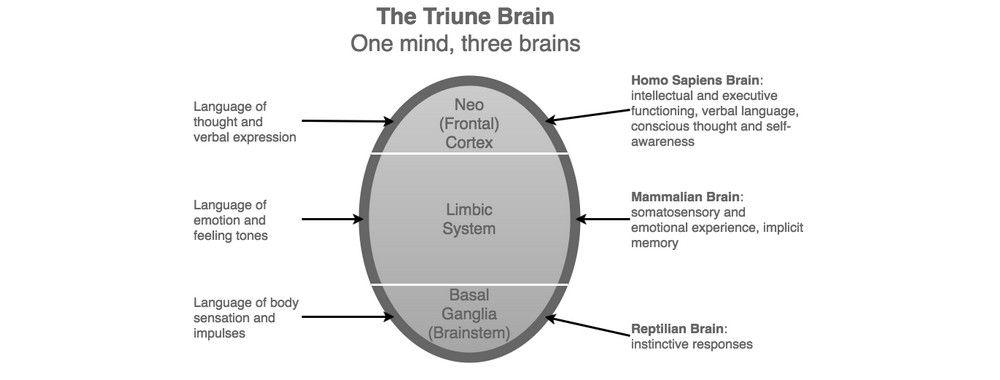

In the 1960s, American neuroscientist Paul MacLean formulated the ‘Triune Brain’ model, which divides the human brain into three distinct regions. According to the model, these regions form a hierarchy that reflects the order in which they are thought to have evolved. The three regions are as follows:

- Reptilian or Primal Brain (Basal Ganglia)

- Paleomammalian or Emotional Brain (Limbic System)

- Neomammalian or Rational Brain (Neocortex)

At the most basic level, the Primal Brain helps us distinguish familiar things from unfamiliar ones. Familiar things are usually seen as safe and preferable, while unfamiliar things are treated with suspicion until we have assessed them and the context in which they appear. For this reason, designers, advertisers, and others involved in selling products often use familiarity to evoke pleasant emotions.